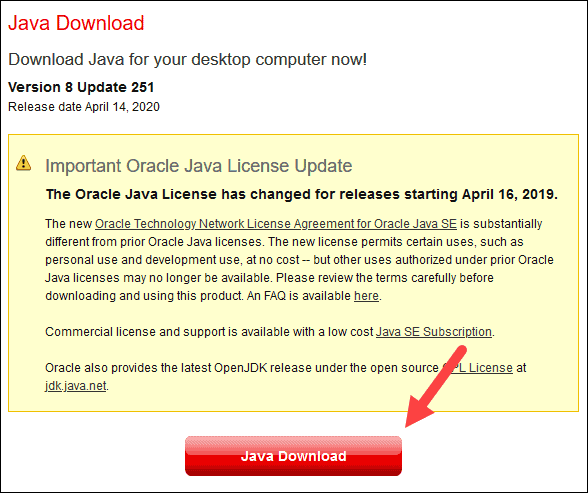

It's time to write our first program using pyspark in a Jupyter notebook. Start a Jupyter notebook from the terminal. A new tab will then open automatically in the browser, and you will see something like this.
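A first notebook cell might look like the sketch below. It is only an illustration, not the original program from this post: the function name `count_even_numbers`, the app name, and the `local[*]` master are assumptions, and it presumes `findspark` and `pyspark` are installed and `SPARK_HOME` is set.

```python
def count_even_numbers(limit=1000):
    """Count the even numbers in range(limit) with a local Spark job."""
    # Imports live inside the function so that defining it does not
    # require Spark; calling it does.
    import findspark
    findspark.init()  # locates Spark, using SPARK_HOME when it is set

    from pyspark.sql import SparkSession

    # "local[*]" runs Spark in-process on all available cores (an assumption
    # for a laptop setup; a cluster would use a different master URL).
    spark = (
        SparkSession.builder
        .master("local[*]")
        .appName("first-pyspark-program")
        .getOrCreate()
    )
    try:
        numbers = spark.sparkContext.parallelize(range(limit))
        return numbers.filter(lambda n: n % 2 == 0).count()
    finally:
        spark.stop()  # release the local Spark resources
```

Calling `count_even_numbers()` in a cell runs the job; if the call fails at `findspark.init()`, the `SPARK_HOME` setting is the first thing to check.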

Now the pyspark package is available through pip, so there is no need for the manual download and setup. Install it with the pip for your Python version (if you are using python2, use `pip install pyspark`). The install output looks like this:

    Running setup.py bdist_wheel for pyspark.
    Installing collected packages: py4j, pyspark
    Successfully installed py4j-0.10.7 pyspark-2.3.1

Almost there. If you are going to use Spark, you will run a lot of operations and trials on data, so it makes sense to do those in a Jupyter notebook. Install Jupyter the same way (if you are using python2, use `pip install jupyter`).

First, we need to know where the pyspark package is installed, so ask pip to show the package details (if you are using python2, use `pip show pyspark`). The output includes lines like these:

    Name: pyspark
    Location: /usr/local/lib/python3.7/site-packages

So pyspark is installed at /usr/local/lib/python3.7/site-packages, which means SPARK_HOME will be `/usr/local/lib/python3.7/site-packages/pyspark`.

Now we need to set the SPARK_HOME environment variable. Open .bash_profile with `vi ~/.bash_profile` and add the line `export SPARK_HOME=/usr/local/lib/python3.7/site-packages/pyspark`. If you also want to run the pyspark shell, one more line needs to be added.

In our case, we want to run through Jupyter, which has to find Spark based on our SPARK_HOME, so we need to install the findspark package (if you are using python2, use `pip install findspark`).
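The step from the `pip show pyspark` output to the SPARK_HOME value can be sketched in Python. This is a minimal illustration, assuming the output contains a `Location:` line like the one in this post; the helper name `spark_home_from_pip_show` is hypothetical.

```python
import posixpath


def spark_home_from_pip_show(pip_show_output):
    """Return the SPARK_HOME implied by `pip show pyspark` output."""
    for line in pip_show_output.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "Location":
            # pyspark lives in a "pyspark" directory under site-packages
            return posixpath.join(value.strip(), "pyspark")
    raise ValueError("no 'Location:' line found in pip show output")


# Only the two lines quoted in this post; real output has more fields.
sample_output = """Name: pyspark
Location: /usr/local/lib/python3.7/site-packages"""

print(spark_home_from_pip_show(sample_output))
# prints /usr/local/lib/python3.7/site-packages/pyspark
```

The printed path is the value to put after `export SPARK_HOME=` in .bash_profile.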

Previously, we had to download Spark from the Spark site, extract it, and do the rest of the setup by hand.

Every time a segment is evaluated, we need to fetch the users from the partitions that match its conditions, then take a UNION for an OR condition and an INTERSECTION for an AND condition. More than 1 million users visit our company's Indian cars websites, mobile sites & apps every day. So if we want to share something important with any broad segment of users, our application goes out of memory for several reasons, such as RAM and the large object space limit. We can do a couple of optimizations, but we know those are temporary fixes. I realized it's time to meet my future love, Spark.

I am using a Mac machine, so the setup steps relate to Mac. I hope you have Homebrew installed on your Mac; if not, install it first. Spark commonly runs on top of Hadoop/HDFS and is written mostly in Scala, a functional programming language that runs on the JVM, so Java needs to be installed first. If Java 8 is not installed yet, install it. To check whether Java is installed correctly, check its version from the terminal. You will get output like this:

    java version "1.8.0_102"
    Java(TM) SE Runtime Environment (build 1.8.0_102-b14)
    Java HotSpot(TM) 64-Bit Server VM (build 25.102-b14, mixed mode)

By default, you will have python. If you like working with the latest software, you will love working in python3.

This example segment contains 3 conditions. We use the Cassandra database because our application is write-heavy and its data is time series.

Basically, segmentation shortlists users based on the conditions given in a segment definition, such as "user visited page X and performed event Y". You may ask what we do with the segmented users, or why we need segmentation at all. Let me explain with an example from one of our company's cool & super websites, CarDekho. Our data team constantly searches for good offers related to cars. Offers differ from city to city and from car model to car model, and they have a validity period too. If we shared every offer with everyone, it would literally be spamming, so we need to share only the relevant offers with each user. Let's say we got a good offer for the Maruti Swift in Hyderabad: we need to send a notification to the users who visited our website, mobile site, or app last week, researched the Maruti Swift, and are from Hyderabad. So, in this case, we need to get the segmented users.
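To make the example concrete, here is a small Python sketch of evaluating such a segment with set operations: conditions joined by AND become an intersection, and conditions joined by OR become a union. The user IDs and per-condition sets are made-up illustration data, and `users_matching_all` / `users_matching_any` are hypothetical helper names, not the application's real code.

```python
from functools import reduce


def users_matching_all(condition_sets):
    """AND: keep only users present in every condition's result set."""
    if not condition_sets:
        return set()
    return reduce(set.intersection, condition_sets)


def users_matching_any(condition_sets):
    """OR: keep users present in at least one condition's result set."""
    return set().union(*condition_sets)


# Hypothetical user IDs returned for each channel last week.
web_visitors = {"u1", "u2"}
mobile_site_visitors = {"u3"}
app_visitors = {"u3", "u4"}

# "Website OR mobile site OR app" is a union.
visited_last_week = users_matching_any(
    [web_visitors, mobile_site_visitors, app_visitors]
)

# Hypothetical results for the other two conditions.
researched_maruti_swift = {"u2", "u3", "u5"}
from_hyderabad = {"u3", "u4", "u5"}

# The three conditions are joined by AND, so intersect them.
segment = users_matching_all(
    [visited_last_week, researched_maruti_swift, from_hyderabad]
)
print(sorted(segment))  # ['u3']
```

Sets this small fit comfortably in memory; the same operations over more than a million users per day are where a single process starts to struggle.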

I think almost everyone who has a relationship with Big Data will cross paths with Spark in one way or another. I knew that one day I would need to go on a date with Spark, but somehow I kept postponing it for a long time. That day has come, and I am excited about this new journey. We recently started offline segmentation support in our current project.